Article

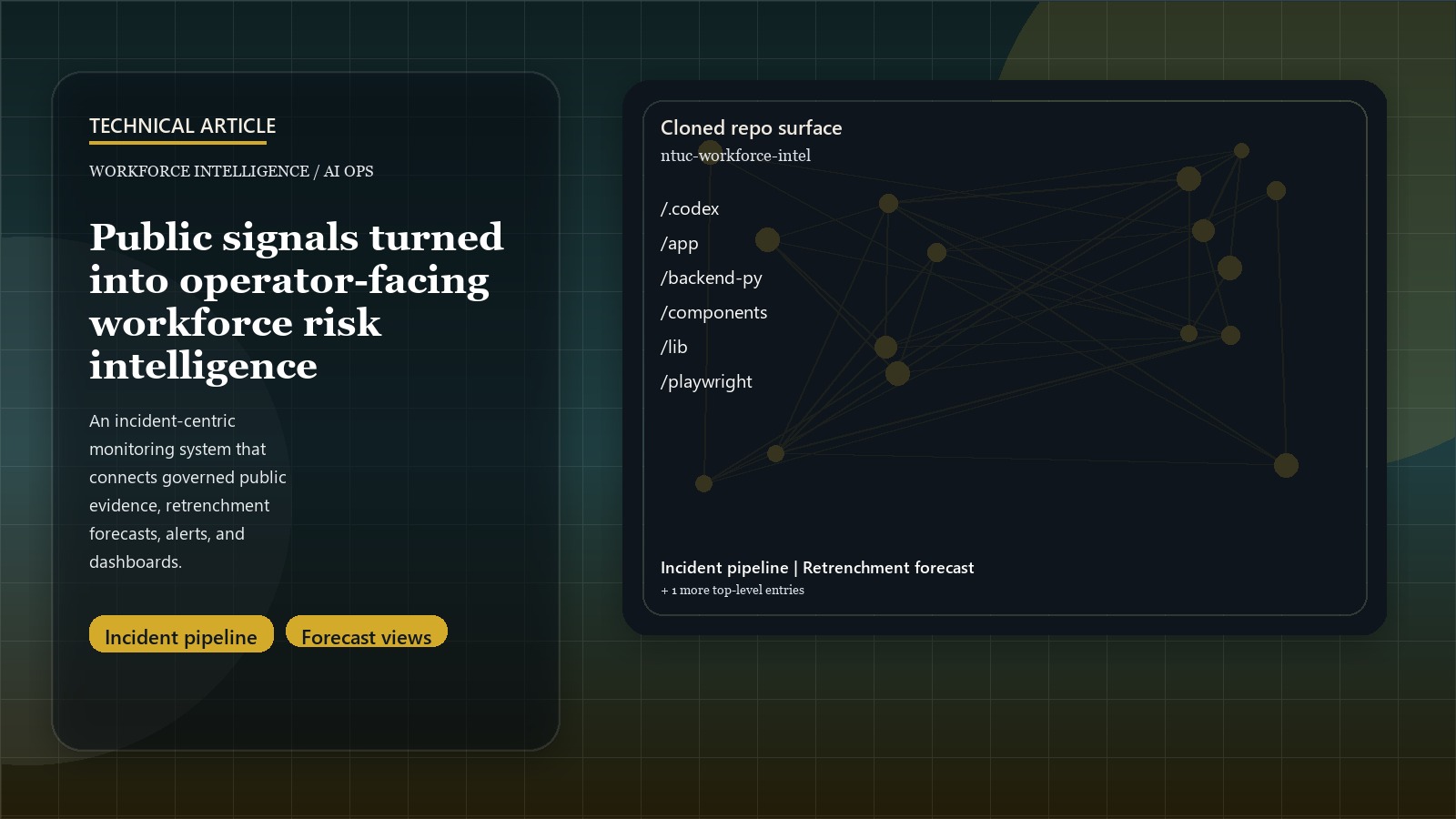

Building Operational Workforce Risk Intelligence from Public Signals

Introduction: Public signals are only useful once they become incidents

Workforce-risk monitoring tends to start as a scraping problem and then gets stuck there. Teams pull news, social posts, company pages, or market feeds, but what operators actually need is not a pile of source rows. They need something closer to an incident system: a way to see what happened, why it matters, how reliable the evidence is, and whether risk is getting worse.

That is the frame that made this prototype interesting. The hard part was not “can we collect public data?” The hard part was how to turn governed public signals into reviewable workforce intelligence with forecasts, alerts, and dashboard surfaces that an analyst could actually use.

Related: for the shorter case-study version, see the Workforce Risk Intelligence project page.

The first architectural decision was to stop thinking in rows

One of the best design choices in the repo is the move away from raw-source thinking. Instead of treating each scrape result as the product unit, the system promotes a more useful structure:

- documents as normalized evidence items

- incidents as clustered workforce events

- company or industry risk snapshots as operator-facing state

That sounds simple, but it changes the product completely. Once incidents become the unit of analysis, the rest of the workflow starts to make sense: deduplication matters, scoring becomes comparable over time, alerts can be tied to persistent entities, and summaries can be grounded in evidence rather than ad hoc prompt context.

This is also the right move for a public-signal workflow. The same event often appears as rewrites, reposts, company statements, social commentary, and secondary reporting. If the system stays at the row level, it inflates noise. If it clusters toward incidents, the operator gets something reviewable.

The BFF boundary matters more than it first appears

The frontend is built with Next.js, but the more important product decision is the same-origin API boundary. The UI does not call the Python backend directly. It goes through a controlled proxy layer that handles header injection, auth behavior, and upstream failure shaping.

That is the sort of choice that separates a prototype from a delivery-ready internal tool. Once the product is meant to sit behind login gates and operator workflows, request shaping and failure behavior become part of the architecture. The same repo also hardens this further with:

- bounded concurrency for scrape and hypothesis paths

- admin-key gates on mutating routes

- timeout controls

- SSRF guardrails on source URLs

- sanitized 5xx behavior and debug controls

None of that changes the core ML story, but it changes whether the system can survive real operator use.

Forecasting works better when it sits next to evidence, not apart from it

A lot of analytics systems separate prediction from monitoring. This prototype moves the other way. The retrenchment module is additive to the public-signal pipeline, so forecasts, company alerts, and historical evidence live in one workflow.

That creates a better product shape for two reasons:

- it keeps prediction tied to observable evidence rather than abstract model output

- it lets the operator move from industry trend, to company risk, to recent source material without changing tools

The repo’s roadmap and implementation notes make this especially clear. The system is designed as a layered intelligence surface:

- industry-level outlooks and six-month forecasts

- company-level early warning over shorter windows

- evidence views and replay artifacts for what drove the score

That is a stronger product than “forecast page over here, scraping page over there.” It makes the prediction explainable by adjacency.

Provider migration is implementation detail, not the product story

The latest repo drift updates the direct OpenAI path to the Responses API and refreshes model defaults. That matters operationally because provider wiring, request metadata, and tests need to keep pace with current API behavior. It does not change the public product claim.

The stable claim is that model calls sit behind a service boundary and observability layer. Summaries and hypotheses are support surfaces for stored evidence, incidents, forecasts, and operator review. The provider can change without changing the product architecture.

Observability and replay are what make the pipeline trustworthy

Another strong choice is that the repo treats observability as part of the product, not just infrastructure. Langfuse tracing is wired into request paths, provider calls, and pipeline evaluation. Replay and experiment harnesses are part of the system shape.

That matters because intelligence workflows are difficult to trust if they cannot be inspected after the fact. A reviewer needs to answer questions like:

- which source family drove the incident?

- what changed between two runs?

- did the score move because the evidence changed or because the logic changed?

- are the generated summaries grounded in the stored evidence?

Without traces and replay artifacts, those questions become manual debugging. With them, the system becomes reviewable in the way an internal decision-support product needs to be.

The broader lesson: risk intelligence is a product architecture problem

The public-facing summary of this work is a workforce intelligence prototype built on Next.js, FastAPI, Postgres, and Langfuse. That is true, but incomplete.

The deeper lesson is that this kind of product is mostly about choosing the right system boundaries:

- incidents instead of rows

- governed evidence before narration

- dashboards tied to replayable pipeline state

- forecasts placed beside evidence, not detached from it

- operator review as a first-class design target

That is the part worth carrying forward. Public-signal monitoring only becomes useful when the product is designed around what an analyst or policy team can review, not around what a scraper can collect.