Article

Designing Creator AI as a Backend-First Content Generation Platform

Introduction: generation quality is not the whole product

It is easy to evaluate an AI content-generation system by looking only at the draft it produces. That is also the fastest way to miss the harder engineering problem.

For a learning-content platform, the real question is not “can a model produce a plausible course or quiz?” The real question is whether the surrounding workflow can make that generation reproducible, reviewable, grounded, and safe to use across different delivery contexts.

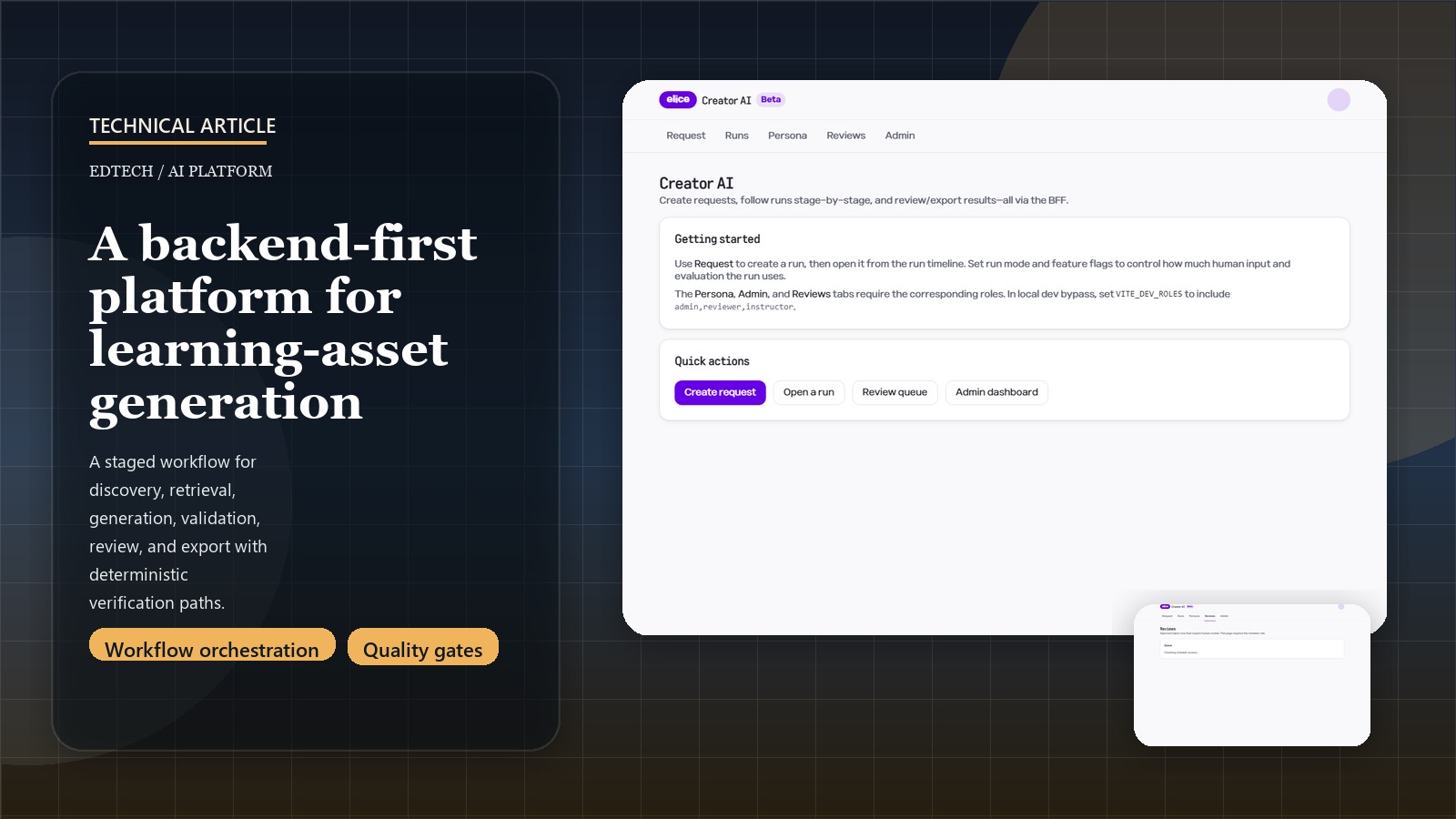

Creator AI is interesting because it is built around that second question. The platform is not just a prompt wrapper. It is a staged system with explicit workflow boundaries across gate, discovery, strategy, retrieval, generation, validation, feedback, and export.

Related: for the shorter case-study version, see the Creator AI project page.

Making the backend the product was the right first decision

The repo describes itself as backend-first and API-first, and that is the correct framing. The frontend exists, but it is intentionally thin. The system of record is the orchestrated backend: runs, tasks, artifacts, events, state transitions, eval outputs, and workflow invariants.

That matters because content-generation systems quickly outgrow “submit prompt, render result” UX patterns. Once a product needs:

- structured intake

- retrieval and standards grounding

- human review queues

- export packaging

- validation and gating

- reproducible prompt lineage

the workflow engine becomes more important than the surface UI.

Creator AI reflects that clearly. The BFF is a facade, not the logic center. The backend owns the contract and the execution discipline.

Discovery lock is a better control point than downstream patching

One of the strongest design choices in the platform is treating Discovery lock as an integrity boundary. The workflow does not simply allow incomplete context to flow downstream and hope that validation or review will catch it later. It explicitly blocks or routes to waiting_human when the brief is incomplete or uncertainty remains too high.

That is a better product behavior for two reasons:

- it keeps uncertainty visible at the right stage

- it prevents downstream artifacts from looking more definitive than the inputs justify

This is the kind of choice that often gets skipped in LLM systems because it slows the happy path. In practice, it makes the system more trustworthy. Human review becomes a deliberate boundary instead of a vague fallback.

Contract-first schemas and verification are doing real work here

Another thing this repo gets right is that contracts are not just documentation. Shared schemas, OpenAPI snapshots, verification matrices, and deterministic proof paths are part of the operating model.

That is especially valuable in a multi-service AI system. Without it, drift shows up everywhere:

- frontend assumptions stop matching backend artifacts

- prompt payloads change without corresponding validator updates

- replay becomes unreliable

- exports and downstream integrations become brittle

The repo’s verification matrix is a good signal of intent. It treats prompt-by-prompt or feature-by-feature acceptance as something that can be mapped to deterministic proofs rather than only manual product review. That is the right discipline for a platform with this much workflow branching.

Retrieval, standards, and evaluation are not accessories

A weaker version of this product could have stopped at generation plus light validation. Creator AI goes further by treating standards grounding, curated retrieval corpora, evaluation, and review artifacts as first-class subsystems.

That changes the product from “AI writes content” into “AI runs inside a governed content pipeline.” The system is designed to answer questions such as:

- what standards or examples informed this output?

- which prompt registry version produced it?

- what validations passed or failed?

- what review state is this artifact in?

- can the result be replayed or promoted safely?

Those questions are what enterprise or institutional users actually care about once the novelty of generation wears off.

The platform lesson: orchestration is the real moat

The visible headline for Creator AI is that it generates courses, labs, quizzes, and capstones. The more important engineering lesson is that the platform treats orchestration, governance, and evaluation as the real differentiators.

That shows up in a few concrete places:

- explicit run and task state machines

- durable artifacts and replay

- simulation-mode verification for deterministic testing

- gated review and export flows

- observability and infrastructure hooks that anticipate production deployment

This is the direction I find most interesting in AI platform work. The model matters, but the workflow discipline around the model matters more. That is what turns generation from a demo into a platform.