Project

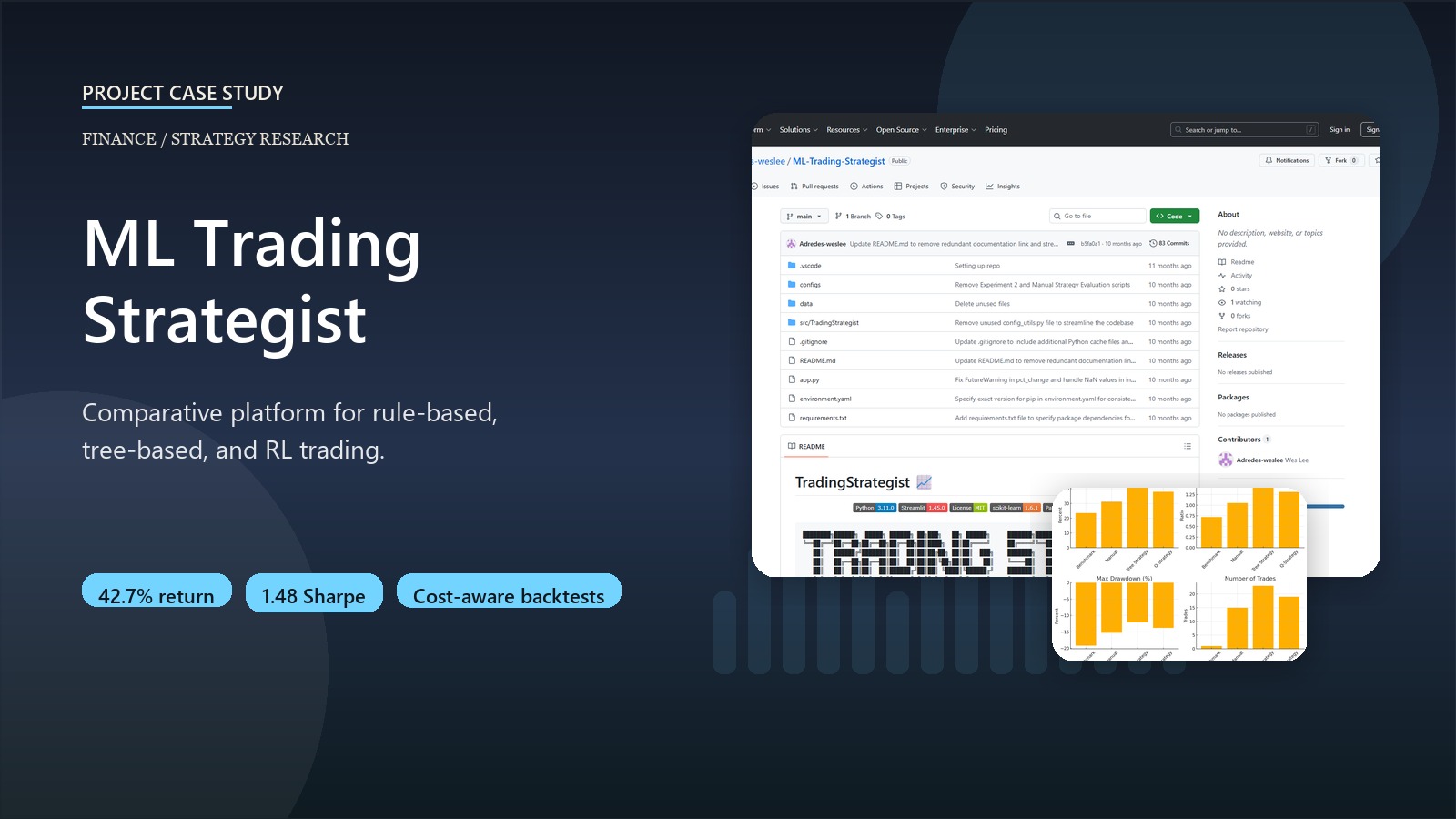

ML Trading Strategist

A comparative trading research platform spanning rule-based, tree-based, and reinforcement-learning strategies.

Business context

Many trading projects focus on a single model family and under-specify execution costs, which makes backtested results look stronger than they would be under live trading conditions. This project is a research platform for comparing rule-based, supervised, and reinforcement-learning strategies under more realistic backtesting assumptions.

Outcome

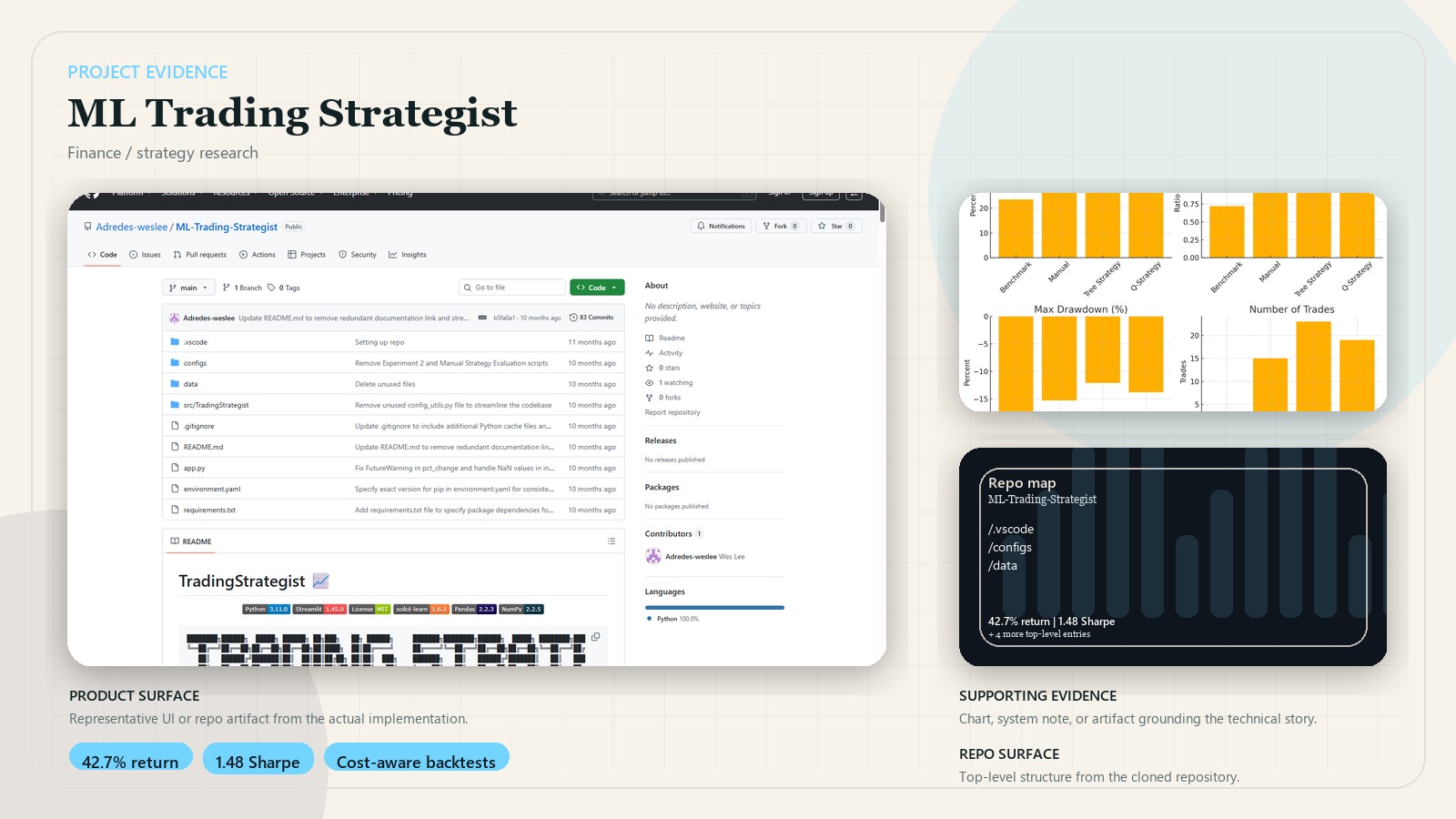

- Supports benchmark, manual, tree-based, and Q-learning strategy families in one framework.

- Includes transaction costs, market impact, and slippage so results are not based on frictionless assumptions.

- In the repo’s JPM backtests, the tree-based strategy reached 42.7% cumulative return with a 1.48 Sharpe ratio.

- Exposes the framework through a Streamlit dashboard for strategy analysis and comparison.
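For readers who want to see how figures such as cumulative return and Sharpe ratio are typically derived from a series of daily portfolio values, here is a minimal sketch. The function names, the 252-day annualization factor, the zero risk-free rate, and the use of the sample standard deviation are all assumptions made for illustration; the write-up does not state which conventions the repo uses, and the sample values are invented.

```python
import math
import statistics


def daily_returns(values):
    """Simple period-over-period returns from a list of portfolio values."""
    return [values[i] / values[i - 1] - 1.0 for i in range(1, len(values))]


def cumulative_return(values):
    """Total return from the first to the last portfolio value."""
    return values[-1] / values[0] - 1.0


def sharpe_ratio(values, risk_free_daily=0.0, periods_per_year=252):
    """Annualized Sharpe ratio (assumed convention: sample std, 252 days)."""
    excess = [r - risk_free_daily for r in daily_returns(values)]
    spread = statistics.stdev(excess)
    if spread == 0:
        return float("nan")  # undefined when returns never vary
    return math.sqrt(periods_per_year) * statistics.mean(excess) / spread


# Illustrative portfolio values only, not results from the project.
portfolio = [100.0, 102.0, 101.0, 104.0]
print(f"cumulative return: {cumulative_return(portfolio):.2%}")
print(f"sharpe ratio: {sharpe_ratio(portfolio):.2f}")
```

Because the Sharpe ratio depends on the annualization factor and risk-free rate, two backtests are only comparable when both use the same conventions.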

Key decisions

- Benchmarked multiple strategy families rather than optimizing only one approach.

- Modeled commission, impact, and portfolio constraints to keep the research closer to live trading conditions.

- Used YAML configuration so experiments remain reproducible and comparable.

- Built extensibility into indicators, strategies, and simulation components instead of hardcoding one workflow.
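One common way to combine configuration-driven experiments with pluggable strategies is a small registry keyed by name. The sketch below is hypothetical: `Strategy`, `register`, `BuyAndHold`, `build_strategy`, and the config keys `strategy` and `params` are illustrative names, not the repo's actual API. A plain dict stands in for a parsed YAML file so the example has no third-party dependencies.

```python
from abc import ABC, abstractmethod

# Hypothetical registry mapping a config name to a strategy class.
STRATEGIES = {}


def register(name):
    """Class decorator that records a strategy under a config-friendly name."""
    def decorator(cls):
        STRATEGIES[name] = cls
        return cls
    return decorator


class Strategy(ABC):
    """Assumed common interface: prices in, {day_index: signed shares} out."""

    @abstractmethod
    def generate_trades(self, prices):
        ...


@register("benchmark")
class BuyAndHold(Strategy):
    """Buys a fixed number of shares on the first day and holds."""

    def __init__(self, shares=1000):
        self.shares = shares

    def generate_trades(self, prices):
        return {0: self.shares} if prices else {}


def build_strategy(config):
    """Instantiate a strategy from a dict such as one loaded from YAML."""
    cls = STRATEGIES[config["strategy"]]
    return cls(**config.get("params", {}))


# Stand-in for the contents of an experiment file.
config = {"strategy": "benchmark", "params": {"shares": 500}}
strategy = build_strategy(config)
print(strategy.generate_trades([10.0, 11.0, 12.0]))  # {0: 500}
```

With this shape, adding a new strategy family means registering one more class, and an experiment is fully described by its configuration file.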

System design

Market data flows through preprocessing and technical-indicator pipelines, then into manual, tree-based, or Q-learning strategy modules. Generated trades are evaluated by a market simulator with cost modeling, and the dashboard surfaces comparative performance metrics and strategy behavior.
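The simulation step can be sketched as follows. This is a simplified illustration, not the project's simulator: the `simulate` function, its parameters, and the cost model (a fixed commission per trade plus a proportional impact that moves the fill price against the trader) are assumptions, and the prices are invented.

```python
def simulate(prices, trades, start_cash=100_000.0, commission=0.0, impact=0.0):
    """Turn trades into daily portfolio values under a simple cost model.

    prices: list of daily prices for one symbol.
    trades: dict mapping day index to signed share count (+buy, -sell).
    commission: fixed cash fee charged per executed trade (assumed model).
    impact: fractional price penalty applied against the trader (assumed model).
    """
    cash = start_cash
    shares = 0
    values = []
    for day, price in enumerate(prices):
        qty = trades.get(day, 0)
        if qty != 0:
            # Buys fill above the quoted price, sells fill below it.
            fill = price * (1 + impact) if qty > 0 else price * (1 - impact)
            cash -= qty * fill + commission
            shares += qty
        # Mark the position to market at the quoted price.
        values.append(cash + shares * price)
    return values


prices = [10.0, 11.0, 12.0]
trades = {0: 100, 2: -100}  # buy on day 0, sell on day 2

frictionless = simulate(prices, trades, start_cash=10_000.0)
with_costs = simulate(prices, trades, start_cash=10_000.0,
                      commission=10.0, impact=0.01)
print(frictionless[-1], round(with_costs[-1], 2))
```

Running the same trades with and without costs shows how much of a strategy's apparent edge is consumed by execution friction, which is the comparison the platform is built around.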

Stack

- Python, pandas, NumPy, scikit-learn, and YAML-driven configuration

- Custom indicator library, strategy modules, and cost-aware market simulation

- Streamlit for interactive experiment review
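As an illustration of what one entry in an indicator library might look like, here is a dependency-free sketch of a price-to-simple-moving-average ratio. The write-up does not list the indicators the project implements, so the choice of indicator and the names `sma` and `price_to_sma` are assumptions; a pandas version would typically use a rolling mean instead.

```python
def sma(prices, window):
    """Simple moving average; None until a full window is available."""
    out = []
    running = 0.0
    for i, price in enumerate(prices):
        running += price
        if i >= window:
            running -= prices[i - window]  # drop the value leaving the window
        out.append(running / window if i >= window - 1 else None)
    return out


def price_to_sma(prices, window):
    """Ratio of price to its moving average; above 1 means price is above trend."""
    return [
        price / average if average is not None else None
        for price, average in zip(prices, sma(prices, window))
    ]


print(sma([1.0, 2.0, 3.0, 4.0], 2))  # [None, 1.5, 2.5, 3.5]
```

Returning `None` for the warm-up period keeps the output aligned with the input dates, so a strategy cannot accidentally act on an average computed from too few observations.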