Singapore-based AI engineer | AI platforms | evaluation systems

Wes Lee

Builds backend-first AI platforms, multimodal evaluation systems, and operator-facing decision tools.

Currently AI Engineer and ASEAN Education Program Director at Elice. This site focuses on inspectable systems and selected private-code case studies across retrieval, evaluation, finance, forecasting, and decision support.

- AI platforms

- Evaluation systems

- Retrieval and orchestration

- Operator-facing products

Flagship case studies

Start with the four case studies that best represent the portfolio.

The set is intentionally small: these four give the clearest signal of platform architecture, evaluation rigor, and operator-facing product execution.

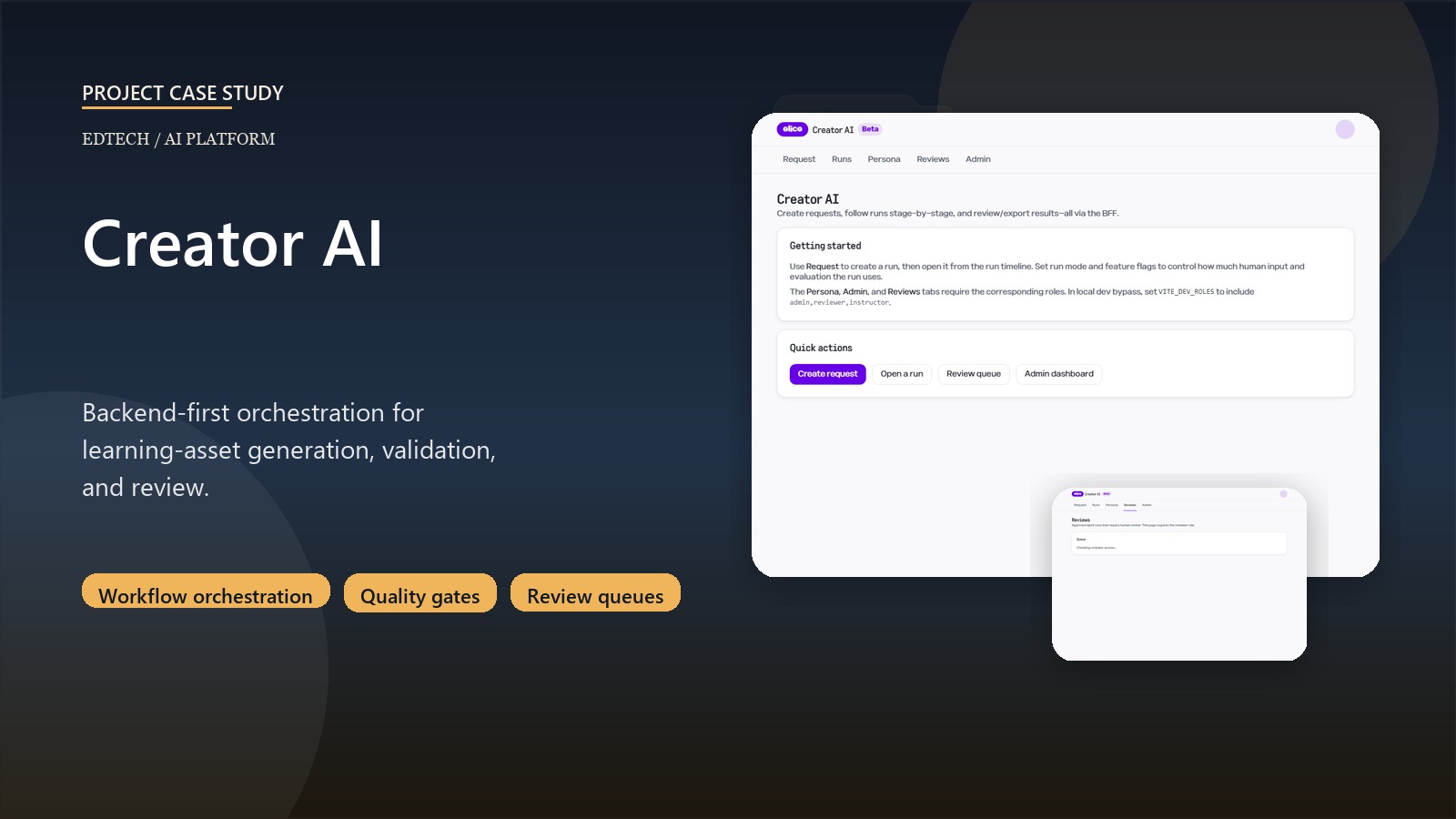

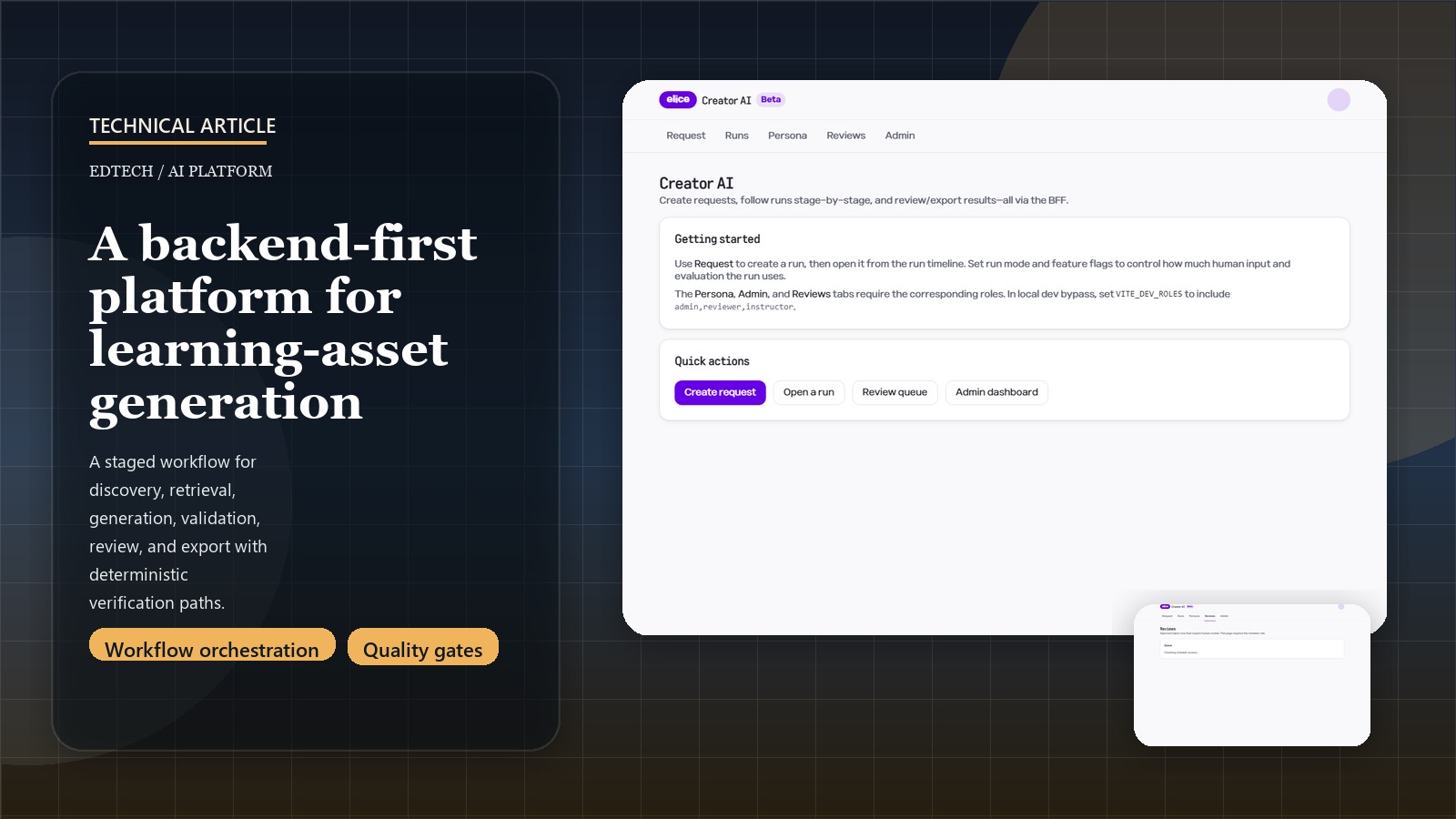

Edtech / AI platform

Creator AI Platform

A backend-first orchestration platform for learning-asset generation with staged discovery, retrieval, validation, and human review built into the product itself.

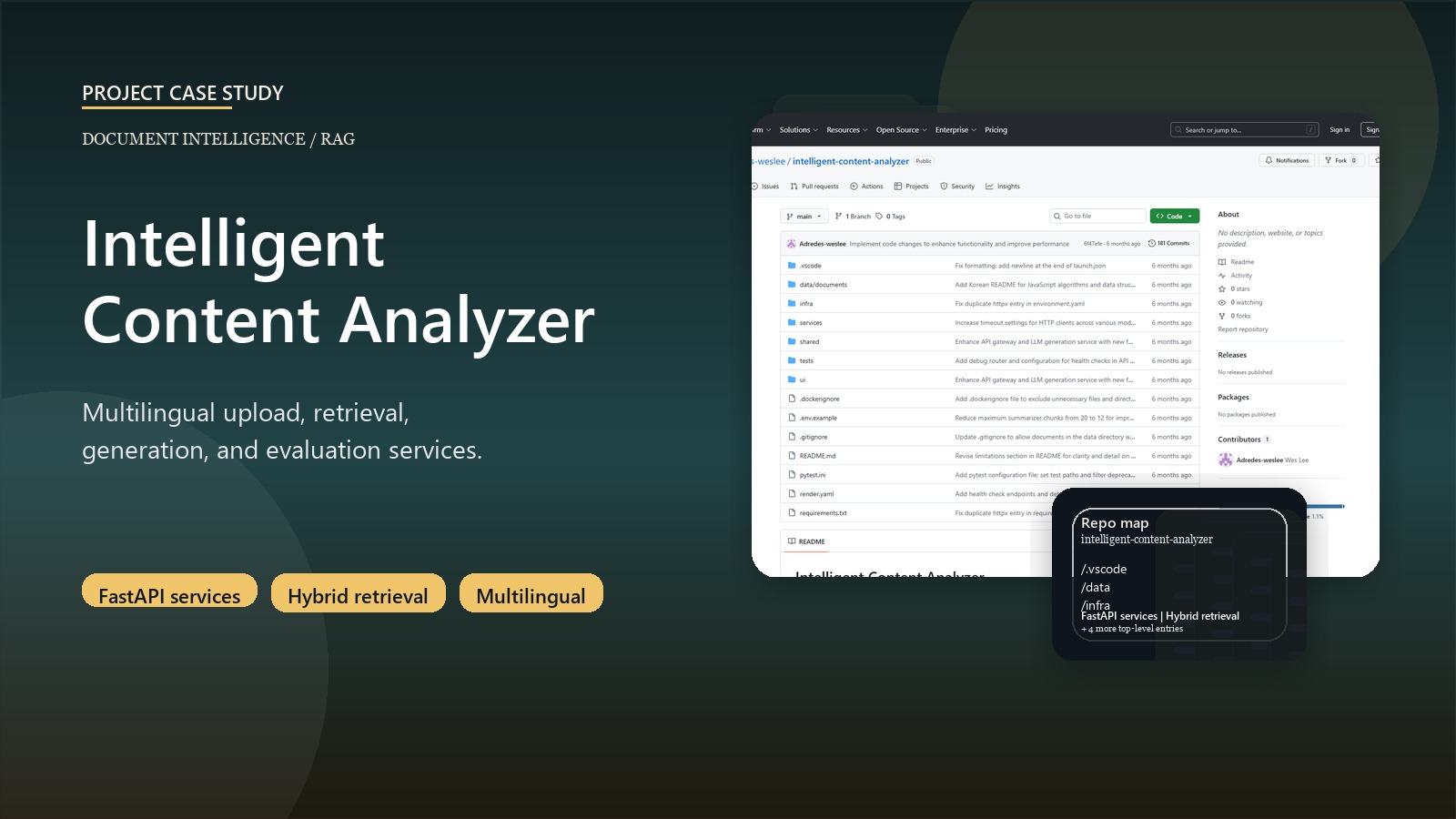

Document intelligence / service architecture

Intelligent Content Analyzer

A modular document intelligence platform that splits upload, retrieval, generation, and evaluation into services rather than one monolithic demo app.

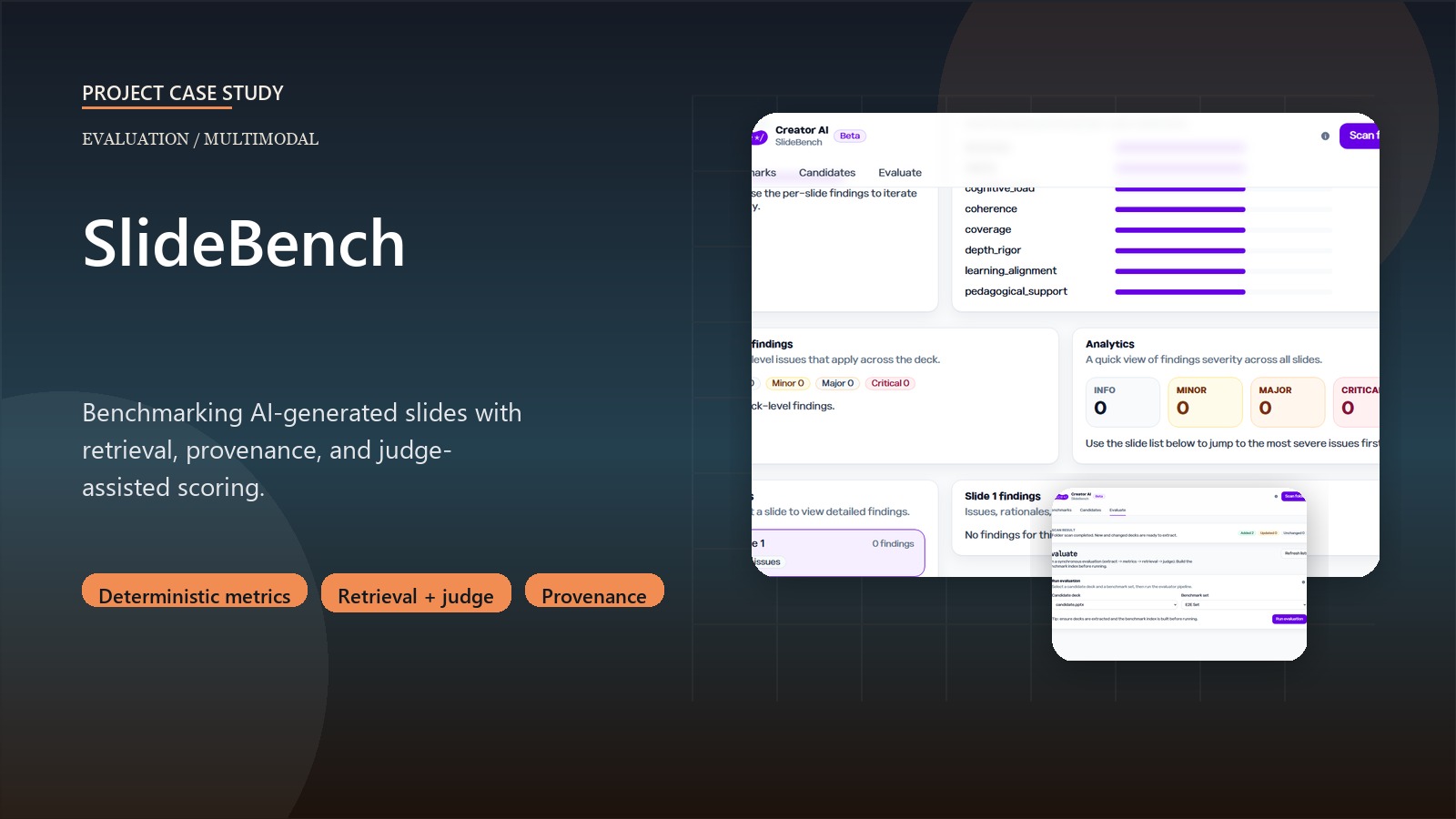

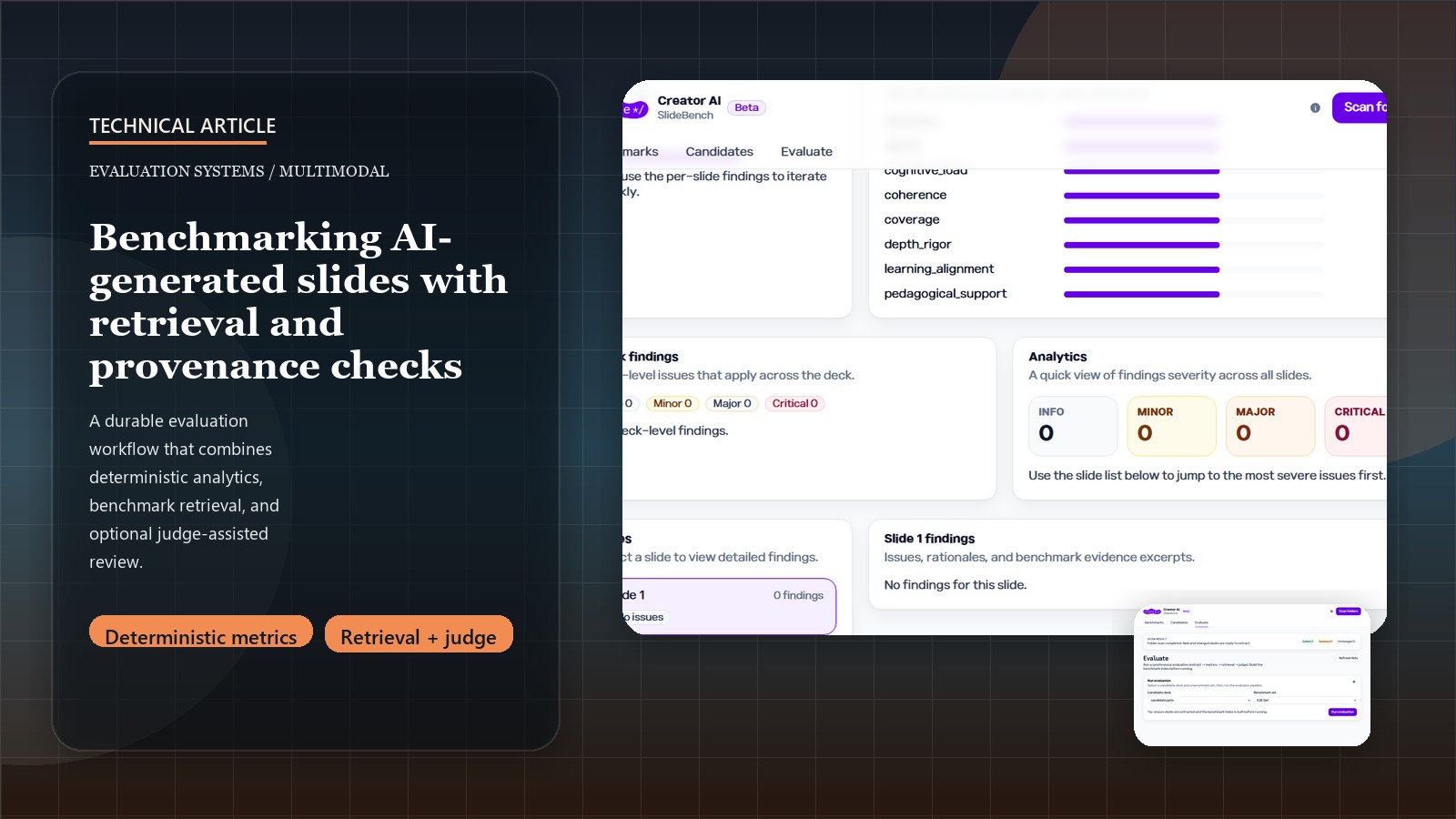

Evaluation systems / multimodal

SlideBench AI Evaluation Workbench

A multimodal evaluation workbench for AI-generated slides and learning artifacts, combining deterministic metrics, retrieval, provenance checks, and judge-assisted scoring.

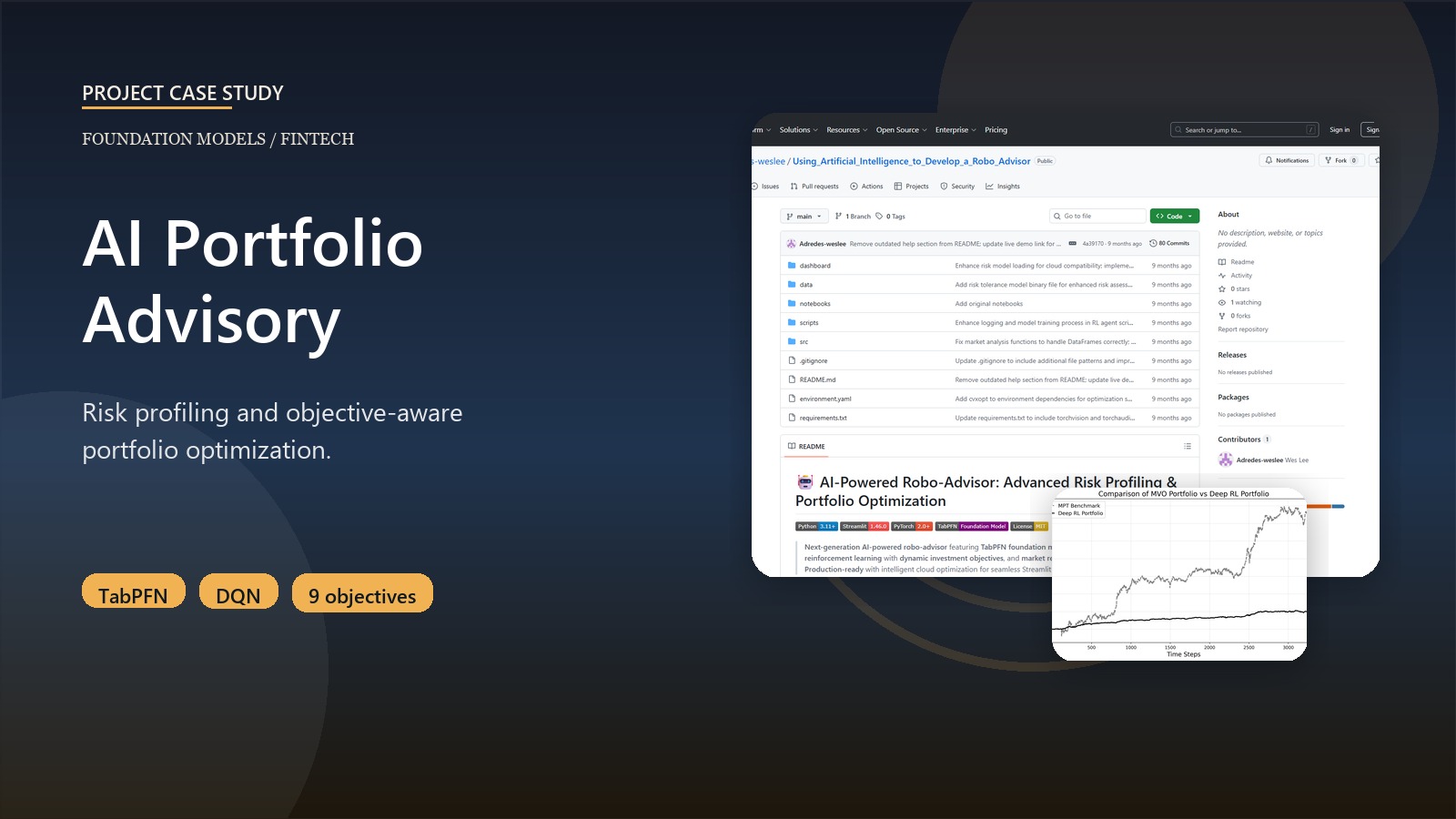

Finance / foundation models

AI Portfolio Advisory System

A robo-advisor platform that applies TabPFN to investor risk assessment and pairs it with objective-aware portfolio optimization.

Beyond these four, the supporting case studies span workforce intelligence, prompt optimization, enterprise RAG, policy forecasting, inventory planning, graph ML, climate analytics, and other applied systems.

Current work

Current work centers on orchestration, evaluation, and operator review.

AI platforms

Backend-first systems that connect retrieval, generation, validation, and human-in-the-loop review.

Evaluation and observability

Benchmarking, evidence checks, regression coverage, tracing, and structured scoring for model behavior.

Product interfaces

FastAPI, Streamlit, and internal-tool patterns that give operators usable surfaces instead of opaque models.

Decision domains

Education, workforce intelligence, finance, public health, and business analytics: domains where systems need to drive action.

Recent writing

Writing behind the systems.

Technical notes on implementation choices, evaluation logic, and what changed between experiment and usable product.

Mar 24, 2026

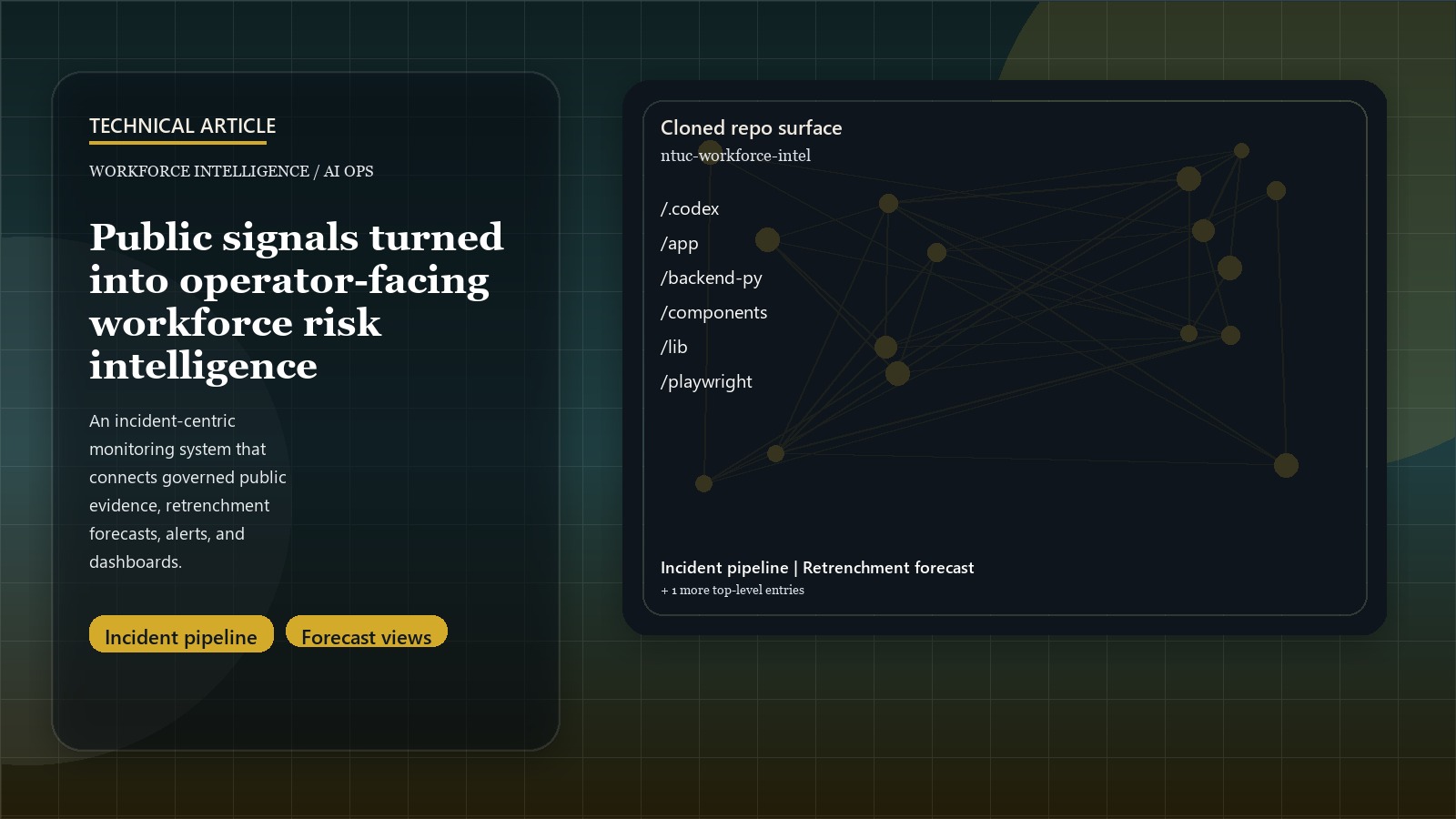

Operational Workforce Risk Intelligence from Public Signals

Why workforce monitoring becomes useful only after public signals are turned into incidents, forecasts, alerts, and reviewable risk views.

Read article

Mar 24, 2026

Designing Creator AI as a Backend-First Platform

Why AI content generation becomes more valuable when orchestration, discovery, validation, and review are part of the product.

Read article

Mar 24, 2026

Building SlideBench for AI-Generated Slides

How deterministic metrics, benchmark retrieval, provenance checks, and judge-assisted scoring combine into one evaluation workflow.

Read article

Next move

Need an engineer who can connect model quality with product execution?

The best fit is work on backend-first AI platforms, evaluation-heavy systems, and decision-support products moving beyond the prototype stage.